Beech Tree Damage Analysis in the Historical Parks – South-West of Berlin

UPDATE 12.12.2020:

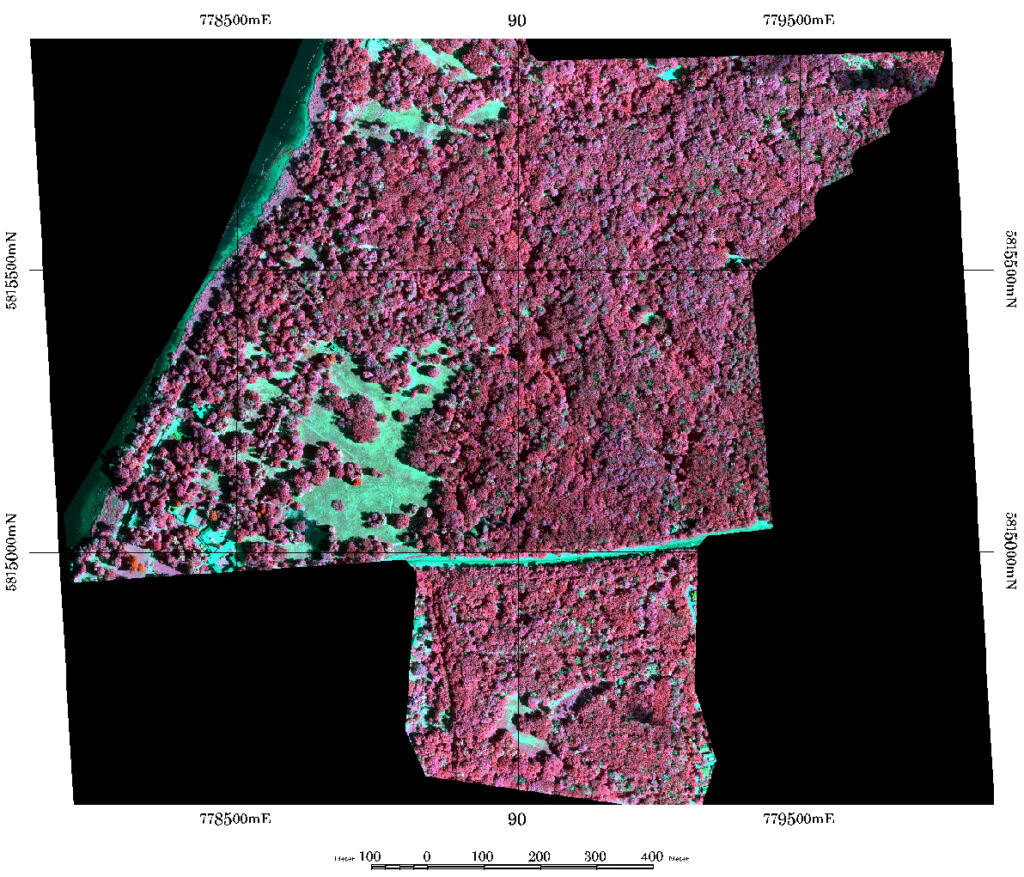

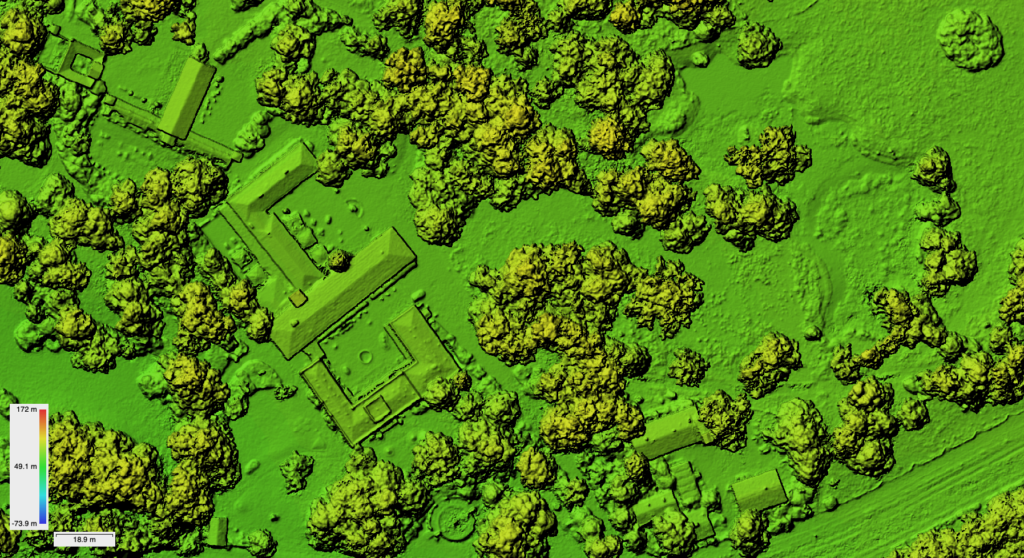

A first classification of four damage categories within the SPSG park regions was carried out in December 2020 using a TensorFlow CNN implementation. The first test area is the Glienicke landscape park in the south-west of Berlin. Beech trees in parts of this area are severely degraded, and the area is currently closed to the public.

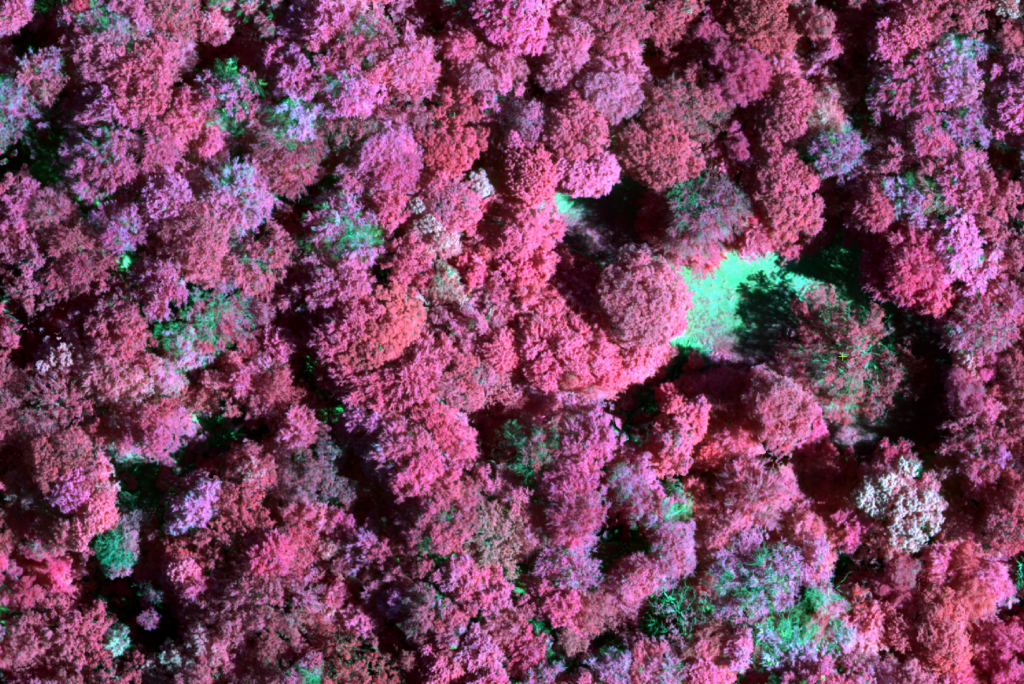

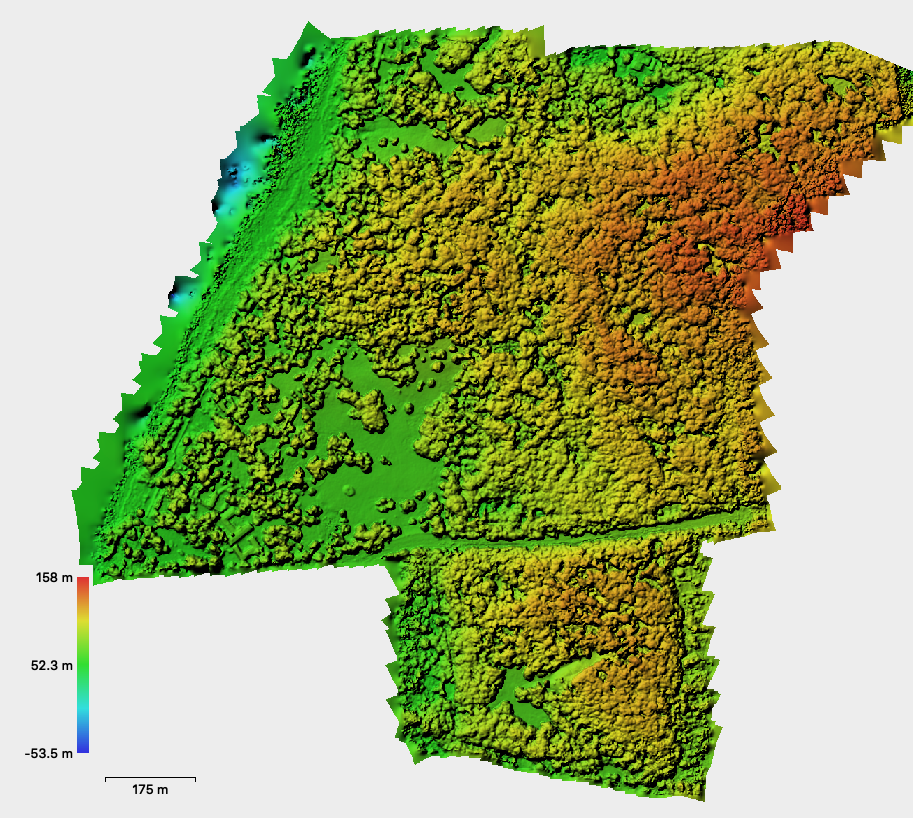

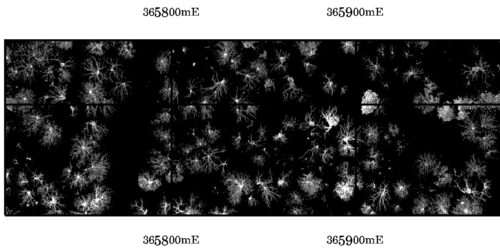

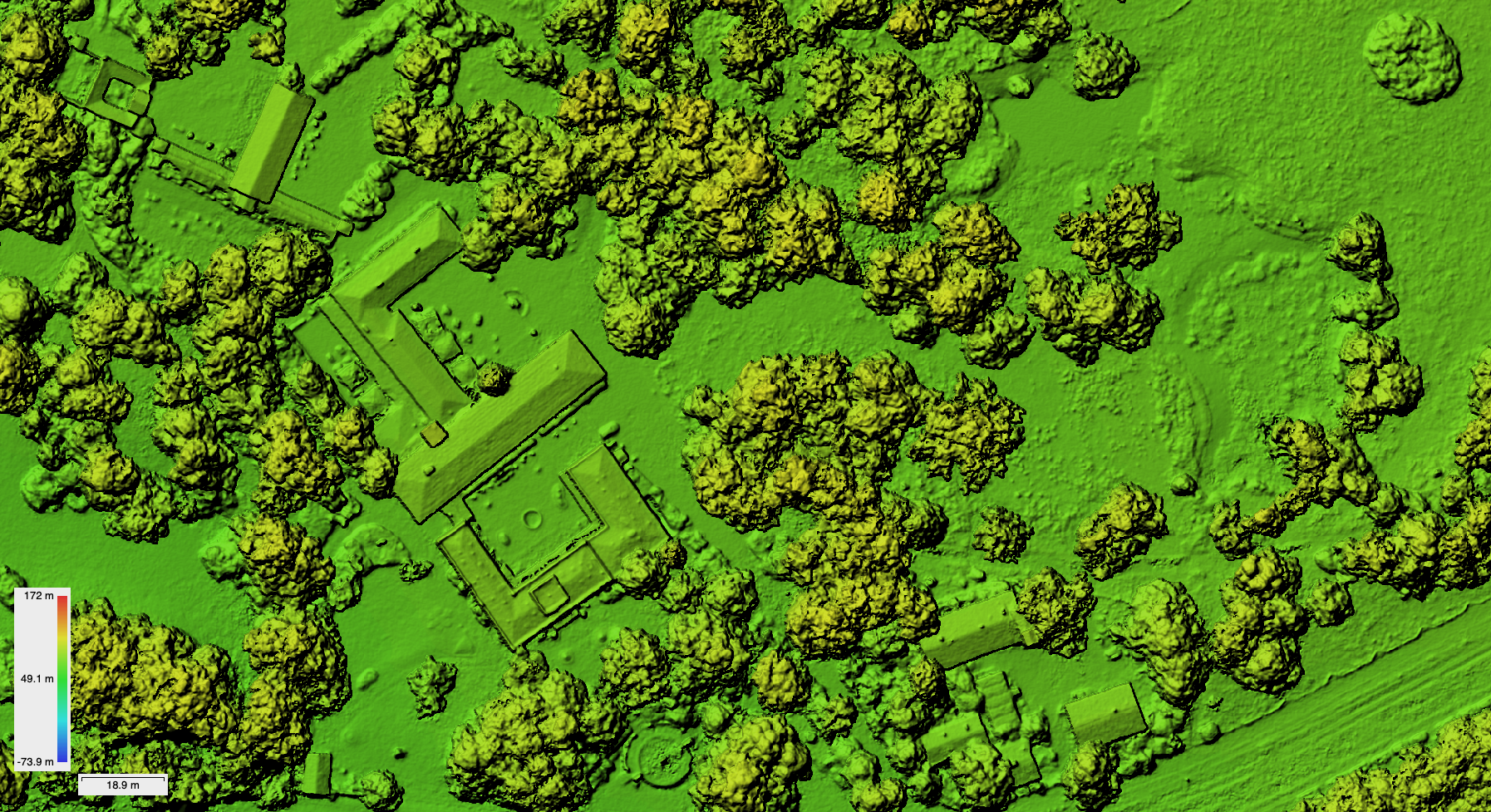

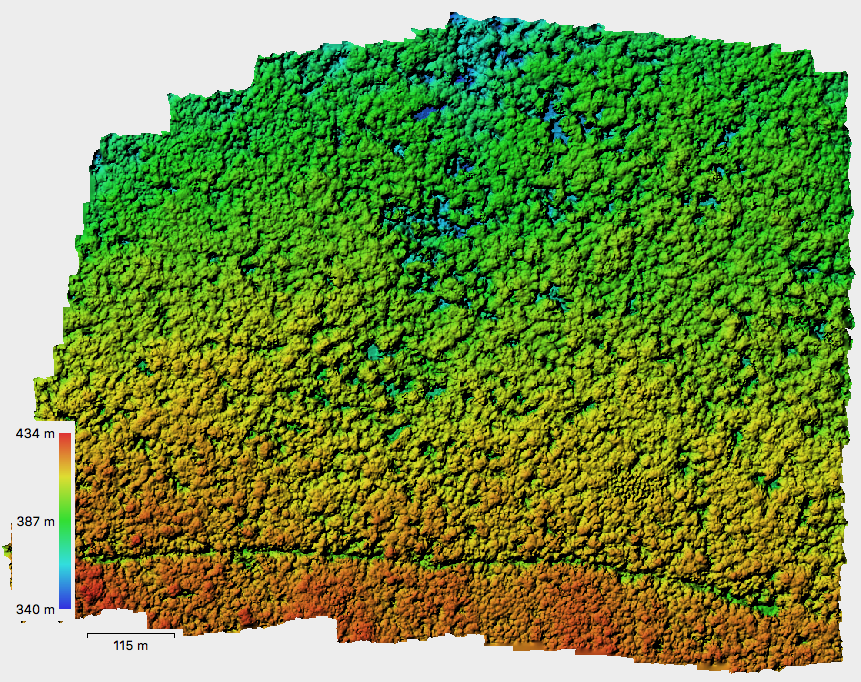

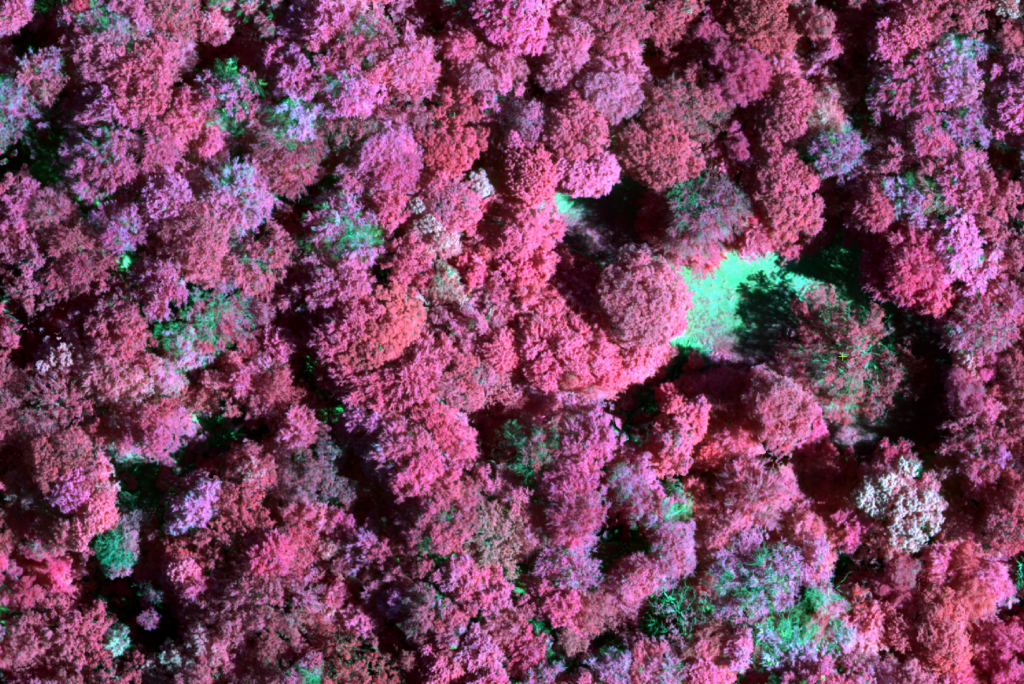

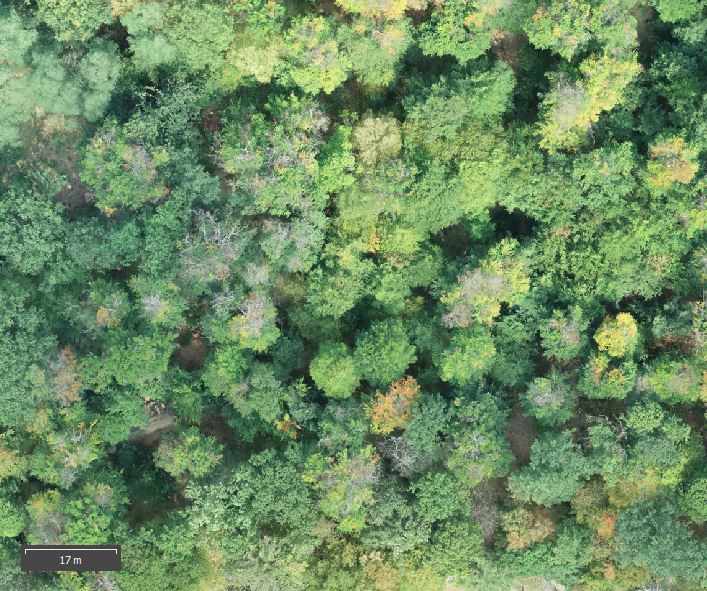

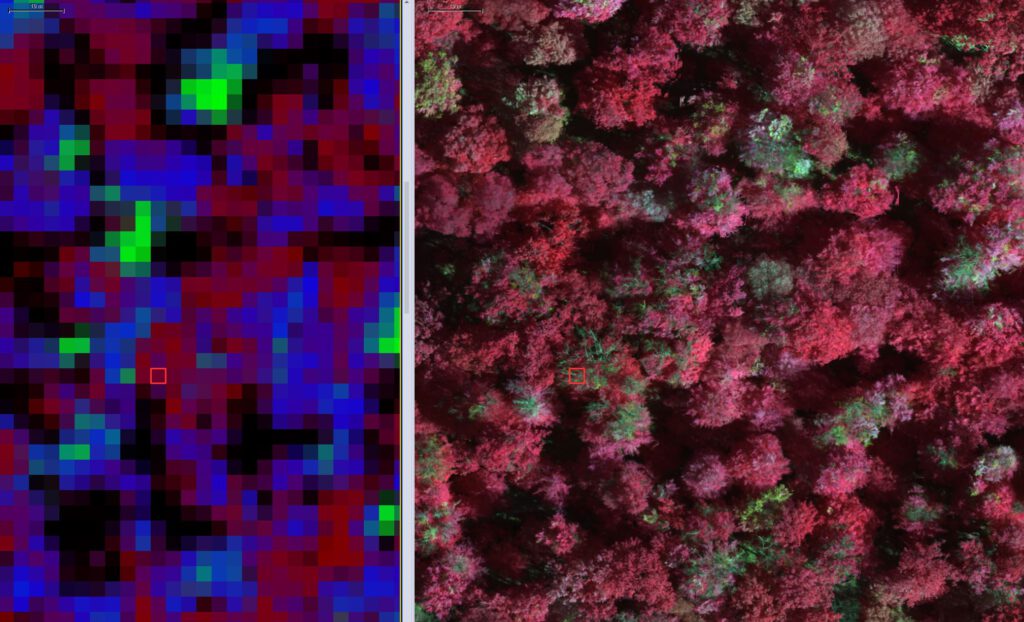

This work uses Phantom 4 RTK (P4R) and Phantom 4 Multispectral (P4M) data captured in mid-June 2020 with RTK georeferencing (Berlin SAPOS service). In total, 1179 P4M multispectral TIFF images with 5 channels and 775 P4R RGB images (JPEG) were captured. Data from both sensors was processed to digital surface models (DSM) with 10 cm spatial resolution and true ortho image mosaics (TOM) with 4 cm (P4R) and 8 cm (P4M) spatial resolution using Agisoft Metashape and DJI Terra. All images were processed at full resolution. All datasets were reprojected to UTM33N ETRS89 (D450) and layer-stacked into a multidimensional PCI Pix database file. The stack also includes the GNDVI, LCI, NDRE, NDVI and OSAVI ratios, which DJI Terra computes automatically from the channel data.

The datasets were used to train a CNN (Convolutional Neural Network) for 4 different damage categories, with 3 x 3 m subset tiles as training data. Individual tree crowns are delineated with an algorithm that relies mainly on local maximum detection within the canopy height model (CHM). The CHM is calculated from the DSM and the digital terrain model (DTM) from LVA Brandenburg; terrain height is derived by interpolating lidar xyz points to a regular 1 m raster. Within each tree crown polygon, the pixel-based CNN membership values for each damage class are evaluated and converted to hard (non-fuzzy) class memberships by applying a fixed threshold to all heatmap raster layers. The full workflow is implemented in Trimble eCognition.
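The CHM and local maximum steps described above can be illustrated with a small, dependency-free sketch. It is not the eCognition rule set used here: the function names, the 5 m minimum height, the 3 x 3 search window and the toy elevation values are all assumptions for illustration, and the DSM and DTM are assumed to be already resampled to the same grid.

```python
def canopy_height_model(dsm, dtm):
    """CHM = DSM - DTM, cell by cell; negative heights are clipped to 0."""
    return [[max(s - t, 0.0) for s, t in zip(srow, trow)]
            for srow, trow in zip(dsm, dtm)]


def local_maxima(chm, min_height=5.0, radius=1):
    """Return (row, col) cells that are strictly higher than all neighbours
    within the search window and at least min_height tall (assumed values)."""
    rows, cols = len(chm), len(chm[0])
    tops = []
    for r in range(rows):
        for c in range(cols):
            h = chm[r][c]
            if h < min_height:
                continue
            neighbours = [
                chm[rr][cc]
                for rr in range(max(r - radius, 0), min(r + radius + 1, rows))
                for cc in range(max(c - radius, 0), min(c + radius + 1, cols))
                if (rr, cc) != (r, c)
            ]
            if all(h > n for n in neighbours):
                tops.append((r, c))
    return tops


# Hypothetical toy rasters (elevations in metres), flat terrain at 40 m.
dtm = [[40.0] * 5 for _ in range(5)]
dsm = [
    [40.0, 41.0, 42.0, 41.0, 40.0],
    [41.0, 55.0, 50.0, 48.0, 41.0],
    [42.0, 50.0, 46.0, 52.0, 42.0],
    [41.0, 48.0, 52.0, 60.0, 41.0],
    [40.0, 41.0, 42.0, 41.0, 40.0],
]

chm = canopy_height_model(dsm, dtm)
tree_tops = local_maxima(chm)
print(tree_tops)
```

In practice the detected tree tops would only seed the crown delineation; growing them into crown polygons is a separate step that this sketch leaves out.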
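The conversion from per-pixel CNN memberships to one hard class per crown might look roughly like the following sketch. The text only states that a fixed threshold is applied to all heatmap layers; averaging the memberships over the crown pixels and picking the highest-scoring class is an assumption made here, as are the 0.5 threshold, the class names and the toy values.

```python
from statistics import mean


def classify_crowns(heatmaps, crowns, threshold=0.5):
    """Assign one hard class per crown.

    heatmaps: {class_name: 2D list of membership values in 0..1}
    crowns:   {crown_id: [(row, col), ...]} pixels inside each crown polygon
    Aggregation by mean and the threshold value are assumptions.
    """
    labels = {}
    for crown_id, cells in crowns.items():
        scores = {
            name: mean(layer[r][c] for r, c in cells)
            for name, layer in heatmaps.items()
        }
        best = max(scores, key=scores.get)
        labels[crown_id] = best if scores[best] >= threshold else "unclassified"
    return labels


# Hypothetical heatmaps for two of the damage classes on a 2 x 3 pixel grid.
heatmaps = {
    "damage_1": [[0.9, 0.8, 0.2], [0.7, 0.6, 0.3]],
    "damage_4": [[0.1, 0.1, 0.4], [0.2, 0.3, 0.45]],
}
crowns = {
    "tree_a": [(0, 0), (0, 1), (1, 0), (1, 1)],
    "tree_b": [(0, 2), (1, 2)],
}

labels = classify_crowns(heatmaps, crowns)
print(labels)
```

Crowns where no class reaches the threshold stay unclassified in this sketch, which keeps weak or ambiguous CNN responses out of the damage map.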

For these first classification runs, the five P4M channels and the NDVI ratio were used.
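For reference, the ratio layers named above are commonly defined as simple band combinations. The sketch below uses the widely published formulas; how DJI Terra computes them internally is not documented here, so treat the exact form (in particular the OSAVI offset of 0.16 without an additional scaling factor) as an assumption. The function names and reflectance values are hypothetical.

```python
def normalized_difference(a, b, offset=0.0):
    """Return (a - b) / (a + b + offset), or None if the denominator is zero."""
    denom = a + b + offset
    return (a - b) / denom if denom != 0 else None


def vegetation_indices(green, red, red_edge, nir):
    """Compute the five ratios from per-pixel reflectances (0..1)."""
    nir_red = nir + red
    return {
        "NDVI": normalized_difference(nir, red),
        "GNDVI": normalized_difference(nir, green),
        "NDRE": normalized_difference(nir, red_edge),
        # LCI: NIR minus red edge, normalised by NIR plus red
        "LCI": (nir - red_edge) / nir_red if nir_red != 0 else None,
        # OSAVI: NDVI with a soil adjustment offset in the denominator
        "OSAVI": normalized_difference(nir, red, offset=0.16),
    }


# Hypothetical reflectances for a vital and a damaged crown pixel.
healthy = vegetation_indices(green=0.08, red=0.04, red_edge=0.25, nir=0.50)
stressed = vegetation_indices(green=0.10, red=0.10, red_edge=0.25, nir=0.30)

print(round(healthy["NDVI"], 3), round(stressed["NDVI"], 3))
```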

July 2020:

In summer 2020, weather conditions allowed only a few morning flights over the park region, but the time windows were long enough to map the full 125 ha area. With the P4M this took approx. 2 hours, since 5 batteries are needed at the 82/82% overlap setting.

For these flights, an EDR-4 clearance was approved by BFA, and we also informed the HMI nuclear reactor facility about the drone flights. However, some people must have observed the start and landing sequence, and they aggressively asked me to stop spying on the neighborhood. As always, there is little public understanding of the potential applications of drone/UAV data. Perception focuses mainly on surveillance or military applications and not on the potential for environmental and ecological monitoring and modeling. Some more in-depth information quickly calmed the situation. Being open-minded, showing the flight plan, permits, contracts and insurance documentation, and explaining the application ideas in the context of the beech die-off in this area made it clear that this was a useful activity (also for the public), and they quickly changed their attitude.

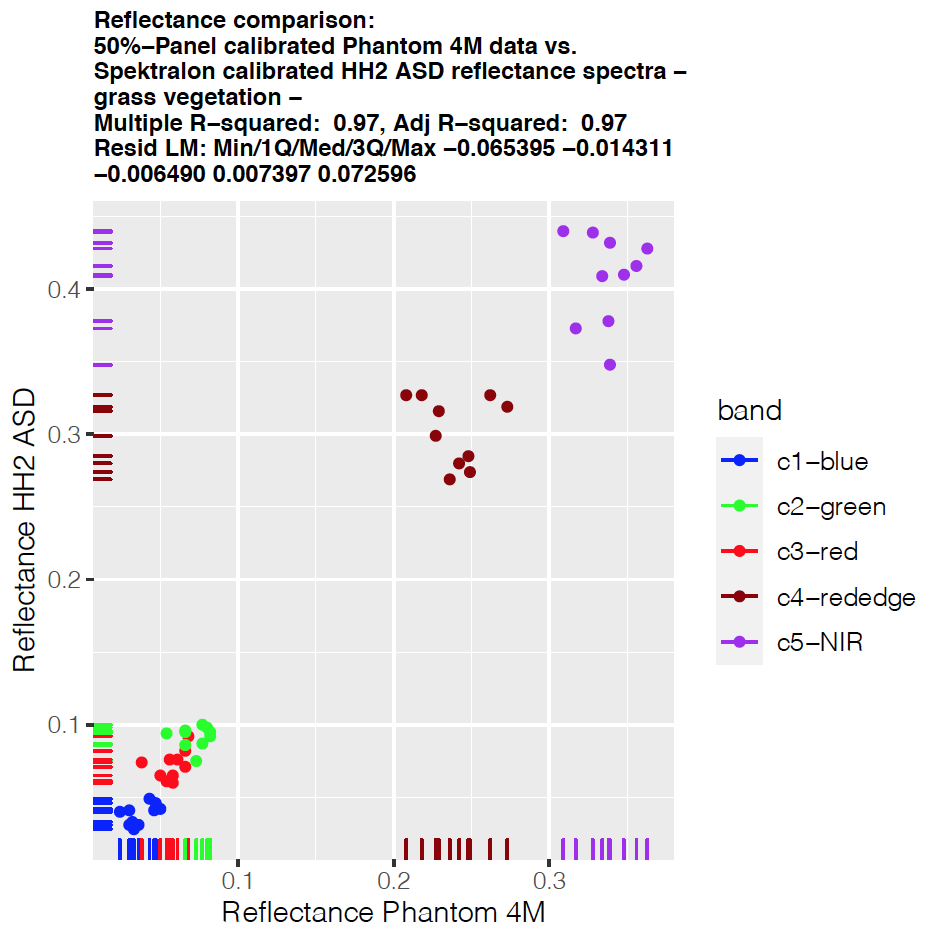

Surface models and point clouds were processed in Agisoft Metashape, whereas the P4M multispectral data was processed with DJI Terra. Terra works better with the P4M data: it seems to use the sun illumination sensor data, and the resulting ortho mosaic was much better color balanced. However, there is basically no documentation of how Terra processes the data. Reference reflectance measurements were taken from concrete surfaces, and we also measured a Micasense 50% reflectance panel. Processing the reflectance panel data was not possible within the Agisoft workflow; the resulting reflectance values are strongly offset. We are working with Agisoft support on this right now, but so far it seems that Agisoft does not use the illumination information at all and that the reflectance panel data might be misread.
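As a sanity check for offset reflectance values, a one-point panel calibration can be worked through by hand. This is a deliberately simplified sketch, not what Agisoft Metashape or DJI Terra do internally: it ignores the sun illumination sensor, vignetting and exposure differences, and it assumes the panel reflects exactly its nominal 50% in the band in question (a real panel comes with per-band calibration values). All names and raw values are hypothetical.

```python
from statistics import mean

PANEL_REFLECTANCE = 0.50  # nominal value of the panel mentioned in the text


def panel_scale(panel_pixels, panel_reflectance=PANEL_REFLECTANCE):
    """Factor that maps raw band values to reflectance so that the
    panel pixels average to the panel's nominal reflectance."""
    return panel_reflectance / mean(panel_pixels)


def to_reflectance(raw_values, scale):
    """Apply the one-point scale factor to raw band values."""
    return [v * scale for v in raw_values]


# Hypothetical raw values for one band: panel pixels and two scene pixels.
panel_raw = [20100, 19900, 20000]
scale = panel_scale(panel_raw)
canopy = to_reflectance([16000, 4000], scale)

print([round(v, 3) for v in canopy])
```

If the panel pixels in a processed mosaic come out far from 0.5 after calibration, that points to the panel data being misread rather than to the scene itself.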